Overview: why optimize for KT servers in Seoul, South Korea (best / best value / cheapest)

For services whose target users are in Seoul, South Korea, this article walks through high-speed connection optimization in three budget tiers: "best" (enterprise-grade dedicated line plus colocation hosting and tuning), "best value" (cost-effective cloud service plus network optimization), and "cheapest" (low-cost VPS plus software-level optimization). Whether you want the lowest latency, the tightest jitter control, or a meaningful improvement on a limited budget, it gives practical steps and testing methods based on the characteristics of KT servers in Seoul.

KT server network environment and common bottlenecks in Seoul, South Korea

Before optimizing, understand how KT, as a major South Korean carrier, handles backbone interconnection, internet exchange points (IX), and international egress links. Common bottlenecks include limited bandwidth on cross-border links, detours caused by BGP path selection, ISP-side jitter at peak hours, and default server-side TCP/IP stack settings that are not tuned for high-latency links. Locating these bottlenecks is the basis for all subsequent tuning.

Testing and diagnostic tools and methods

Use mtr, traceroute, iperf3, and tcpdump for end-to-end diagnosis. First use mtr to find where packet loss and latency fluctuation occur, then use iperf3 to measure the throughput ceiling. For complex issues, capture packets with tcpdump and analyze the three-way handshake, retransmissions after loss, and window scaling. Record baseline data (latency, packet loss rate, bandwidth) so that the effect of each optimization can be compared.
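
As a rough illustration of recording a baseline, the sketch below summarizes a list of round-trip times into average latency, jitter, and loss rate. The function name `summarize_rtt`, the sample values, and the jitter definition (mean absolute difference between consecutive replies) are assumptions made for this example; they are not output from mtr or any specific tool.

```python
from statistics import mean

def summarize_rtt(samples_ms):
    """Summarize RTT probes in milliseconds; None marks a lost probe."""
    if not samples_ms:
        raise ValueError("need at least one probe")
    received = [s for s in samples_ms if s is not None]
    loss_rate = 1 - len(received) / len(samples_ms)
    # Jitter here: mean absolute difference between consecutive replies.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "avg_ms": round(mean(received), 2) if received else None,
        "max_ms": max(received) if received else None,
        "jitter_ms": round(mean(deltas), 2) if deltas else 0.0,
        "loss_pct": round(loss_rate * 100, 1),
    }

# Hypothetical probe results; the third probe was lost.
baseline = summarize_rtt([31.2, 30.8, None, 35.1, 31.0])
print(baseline)
```

Saving one such summary per test run makes the later before/after comparison a matter of comparing two small dictionaries.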

Routing and BGP optimization strategies

At the routing level, prioritize multi-line access and a sensible BGP policy. For the "best" tier, use a dedicated line or colocation service connected directly to South Korea and establish good peering at a local IX (such as KIX). For the "best value" tier, choose a cloud or CDN provider with a directly connected PoP in South Korea. For the "cheapest" tier, reduce hops and detours by buying a VPS in a nearby region or with good peering, and by optimizing BGP neighbors (or using a commercial VPN/accelerator).

Transport layer and Linux kernel tuning (practical guide)

The following server-side adjustments (usually on Linux) can significantly improve performance on high-speed connections: confirm that window scaling is enabled (tcp_window_scaling), switch the congestion control algorithm to BBR if the kernel supports it, raise tcp_rmem/tcp_wmem, and tune net.core.somaxconn and tcp_tw_reuse. After writing the settings with sysctl and restarting the affected services, rerun the tests and compare the results with the baseline; this step is mandatory.
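
To make the buffer sizing concrete, here is a minimal sketch that derives a maximum socket buffer from the bandwidth-delay product (BDP) and prints a sysctl fragment for the parameters named above. The 2x BDP headroom, the 4 MiB floor, the somaxconn value of 4096, and the pairing of BBR with the fq qdisc are illustrative assumptions, not values recommended by KT or by the original article; adjust them to your own measurements.

```python
def bdp_bytes(bandwidth_mbps, rtt_ms):
    """Bandwidth-delay product: bytes in flight needed to fill the path."""
    return int(bandwidth_mbps * 1_000_000 / 8 * rtt_ms / 1000)

def sysctl_fragment(bandwidth_mbps, rtt_ms):
    # Assumption: allow buffers up to 2x the BDP, never below 4 MiB.
    max_buf = max(2 * bdp_bytes(bandwidth_mbps, rtt_ms), 4 * 1024 * 1024)
    settings = {
        "net.ipv4.tcp_window_scaling": "1",
        "net.core.default_qdisc": "fq",            # commonly paired with BBR
        "net.ipv4.tcp_congestion_control": "bbr",  # needs BBR support in the kernel
        "net.core.rmem_max": str(max_buf),
        "net.core.wmem_max": str(max_buf),
        "net.ipv4.tcp_rmem": f"4096 131072 {max_buf}",  # min, default, max
        "net.ipv4.tcp_wmem": f"4096 16384 {max_buf}",
        "net.core.somaxconn": "4096",
        "net.ipv4.tcp_tw_reuse": "1",
    }
    return "\n".join(f"{key} = {value}" for key, value in settings.items())

# Hypothetical path: 1 Gbit/s link with a 150 ms cross-border round trip.
print(sysctl_fragment(bandwidth_mbps=1000, rtt_ms=150))
```

The printed lines can be saved to a file under /etc/sysctl.d/ and loaded with `sysctl --system`. Keeping the fragment in a separate file also makes rollback a matter of deleting that file and reloading.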

TCP/UDP optimization and modern protocols (HTTP/2, QUIC)

HTTP/2 and UDP-based QUIC (HTTP/3) reduce handshake round trips and improve efficiency under concurrency, especially on networks with high packet loss or high latency. For web services or APIs, prioritize moving to a reverse proxy that supports QUIC (such as Caddy, nginx built with HTTP/3 support, or a service such as Cloudflare) to benefit from faster connection establishment and multiplexing.
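
The effect of fewer handshake round trips can be estimated with simple arithmetic. The sketch below is an idealized model: it counts only network round trips, ignores server processing time, packet loss, and optimizations such as TCP Fast Open, and the 150 ms round-trip time is a hypothetical cross-border value.

```python
# Round trips completed before the client can send its first HTTP request.
SETUP_RTTS = {
    "TCP + TLS 1.2": 3,            # 1 for TCP, 2 for the TLS handshake
    "TCP + TLS 1.3": 2,            # 1 for TCP, 1 for the TLS handshake
    "QUIC (new connection)": 1,    # transport and TLS 1.3 handshake combined
    "QUIC (0-RTT resumption)": 0,  # request travels with the first flight
}

def first_byte_ms(setup_rtts, rtt_ms):
    # One more round trip carries the request and the first response byte.
    return (setup_rtts + 1) * rtt_ms

for name, rtts in SETUP_RTTS.items():
    print(f"{name}: {first_byte_ms(rtts, rtt_ms=150)} ms")
```

The absolute saving grows with the round-trip time, which is why the protocol choice matters most for users reaching the server over long cross-border paths.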

CDN, anycast and edge deployment

A CDN or anycast service with nodes in Seoul moves static content and caching logic close to the user, which significantly reduces back-to-origin latency and link load. When evaluating a CDN, focus on the cache hit rate of the Seoul node, the back-to-origin strategy, and the real-time fallback mechanism. For dynamic content, consider edge computing or processing near the origin to reduce cross-border round trips.
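
Why the hit rate matters can be seen from a simple expected-latency model: every request pays the round trip to the edge node, and only cache misses also pay the trip back to the origin. The function name and the 5 ms / 150 ms figures below are assumptions chosen for illustration, not measurements of any provider.

```python
def expected_latency_ms(hit_rate, edge_rtt_ms, origin_rtt_ms):
    """Average latency when misses add one edge-to-origin round trip."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return edge_rtt_ms + (1 - hit_rate) * origin_rtt_ms

# Hypothetical: Seoul edge at 5 ms, overseas origin at 150 ms.
for hit_rate in (0.5, 0.9, 0.99):
    latency = expected_latency_ms(hit_rate, edge_rtt_ms=5, origin_rtt_ms=150)
    print(f"hit rate {hit_rate:.0%}: {latency:.1f} ms")
```

Under this model, the average latency is dominated by the miss path until the hit rate gets close to 100%.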

Application layer optimization and compression/caching strategy

At the application layer, enable gzip/Brotli compression, merge or lazy-load static resources, and set Cache-Control and ETag appropriately. Reducing the time to first byte (TTFB) and the amount of data transferred improves user-perceived speed when bandwidth is limited. On API endpoints, enable pagination, compression, and rate limiting so that bursts of concurrent requests do not saturate the link.
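
The compression and caching headers can be sketched with the Python standard library alone. This is a simplified illustration, not a production handler: `build_response` is a hypothetical helper, the one-hour max-age is an arbitrary choice, and the If-None-Match check uses plain string equality instead of the full comparison rules of HTTP. A weak ETag (the `W/` prefix) is used because the same tag is returned for compressed and uncompressed variants.

```python
import gzip
import hashlib

def build_response(body, if_none_match=None, accepts_gzip=True, max_age=3600):
    """Return (status, headers, payload) for a cacheable resource."""
    etag = 'W/"' + hashlib.sha256(body).hexdigest()[:16] + '"'
    headers = {
        "ETag": etag,
        "Cache-Control": f"public, max-age={max_age}",
        "Vary": "Accept-Encoding",
    }
    if if_none_match == etag:
        # The client copy is still valid: send no body at all.
        return 304, headers, b""
    if accepts_gzip:
        headers["Content-Encoding"] = "gzip"
        return 200, headers, gzip.compress(body)
    return 200, headers, body

page = b"<li>item</li>" * 500
status, headers, payload = build_response(page)
print(status, len(page), "->", len(payload), "bytes")

# A repeat visit that sends the ETag back gets an empty 304 response.
status_again, _, payload_again = build_response(page, if_none_match=headers["ETag"])
print(status_again, len(payload_again))
```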

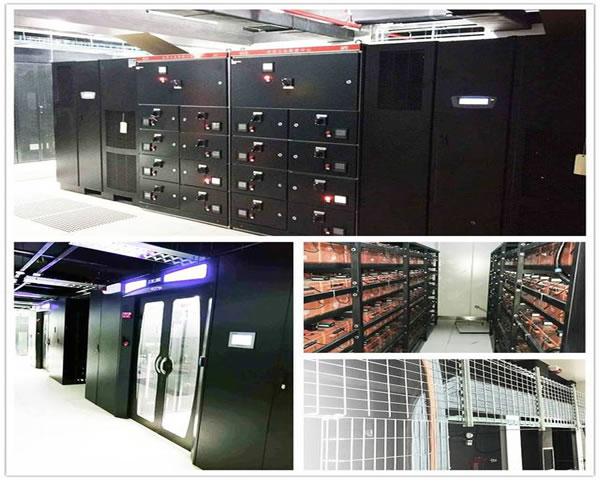

Hardware and network interface optimization

For self-built data centers or dedicated-line customers, choosing NICs that support receive-side scaling (RSS), TCP segmentation offload (TSO), large receive offload (LRO), and a larger MTU (such as 9000) can reduce CPU load and improve throughput. Verify that intermediate devices (switches, firewalls) support VLANs, a consistent MTU along the path, and traffic shaping, and that these are configured correctly.
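
The benefit of a larger MTU can be estimated from per-packet overhead. The sketch below assumes IPv4 and TCP headers without options (40 bytes inside the MTU) and standard Ethernet framing (38 bytes on the wire for preamble, header, frame check sequence, and inter-frame gap). Real traffic with TCP options or VLAN tags will differ slightly.

```python
import math

IP_TCP_HEADERS = 40   # IPv4 (20) + TCP (20), no options
ETHERNET_FRAMING = 38 # preamble 8 + header 14 + FCS 4 + inter-frame gap 12

def wire_efficiency(mtu):
    """Share of on-wire bytes that carry TCP payload."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_FRAMING)

def packets_needed(total_bytes, mtu):
    """Packets required to move total_bytes of TCP payload."""
    return math.ceil(total_bytes / (mtu - IP_TCP_HEADERS))

for mtu in (1500, 9000):
    print(
        f"MTU {mtu}: efficiency {wire_efficiency(mtu):.1%}, "
        f"{packets_needed(10**9, mtu)} packets per GB"
    )
```

The efficiency gain is a few percent, but the packet count drops by roughly a factor of six, and it is the lower packet count that reduces per-packet work on the CPU.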

Comparison of recommended solutions under different budgets

"Best": enterprise-grade dedicated line, colocation in a Seoul data center, local CDN, and BGP optimization, for the lowest latency and strong stability. "Best value": a cloud service with a Seoul PoP (or a cloud hosted in a KT data center), combined with a CDN and kernel tuning, for a high performance-to-cost ratio. "Cheapest": tune TCP parameters on a VPS with good peering and add reverse-proxy caching and a free or low-cost CDN, which can bring significant improvements at the lowest cost.

Deployment and regression testing process (step-by-step)

Carry out the work in the following order: 1) baseline testing and recording; 2) routing and BGP audit; 3) kernel and network interface tuning; 4) rollout of application-layer compression and caching; 5) introduction of CDN/anycast; 6) full regression testing (mtr/iperf3/httpbench). Document the results and a rollback plan at every step so that each change can be reverted and each performance gain can be verified.
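
The regression check in the final step can be as simple as comparing two sets of recorded metrics. The sketch below is one possible shape for that comparison; the metric names, the 5% tolerance, and the sample numbers are assumptions for illustration only.

```python
# Metrics where a larger value is better; all others are lower-is-better.
HIGHER_IS_BETTER = {"throughput_mbps"}

def find_regressions(baseline, current, tolerance=0.05):
    """Return metrics that got worse by more than `tolerance` (a fraction)."""
    regressions = {}
    for name, before in baseline.items():
        after = current[name]
        if name in HIGHER_IS_BETTER:
            worse = after < before * (1 - tolerance)
        else:
            worse = after > before * (1 + tolerance)
        if worse:
            regressions[name] = (before, after)
    return regressions

# Hypothetical measurements before and after a tuning step.
before = {"avg_ms": 32.0, "loss_pct": 0.5, "throughput_mbps": 800}
after = {"avg_ms": 28.0, "loss_pct": 0.9, "throughput_mbps": 910}
print(find_regressions(before, after))
```

In this made-up run, latency and throughput improved but packet loss rose, so the step would be flagged for investigation or rollback instead of being accepted on the strength of the throughput number alone.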

Frequently asked questions and troubleshooting suggestions

If you see jitter or intermittent packet loss, first determine whether the cause is the link (the carrier) or server-side congestion (CPU or network card). If latency on the cross-border link is high, try changing the outbound gateway or contact KT about better peering. For slow HTTPS handshakes, first check the certificate chain, OCSP, and whether the TLS configuration enables modern cipher suites.
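
For the HTTPS case, a quick first check is to see which TLS version and cipher suite a server negotiates and how long connection setup takes. The sketch below uses only the Python standard library. The helper names are hypothetical, treating TLS 1.2 and 1.3 as "modern" is an assumption of this example, and the check does not cover OCSP.

```python
import socket
import ssl
import time

MODERN_TLS = {"TLSv1.2", "TLSv1.3"}  # assumption: what counts as modern here

def is_modern(version):
    return version in MODERN_TLS

def tls_summary(host, port=443, timeout=5.0):
    """Connect once and report the negotiated TLS parameters."""
    context = ssl.create_default_context()  # also verifies the certificate chain
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        # wrap_socket performs the TLS handshake on the connected socket.
        with context.wrap_socket(raw, server_hostname=host) as tls:
            elapsed_ms = (time.perf_counter() - start) * 1000
            cipher_name, _, bits = tls.cipher()
            return {
                "version": tls.version(),
                "cipher": cipher_name,
                "bits": bits,
                "modern": is_modern(tls.version()),
                "connect_plus_handshake_ms": round(elapsed_ms, 1),
            }

# Usage (needs network access): print(tls_summary("example.com"))
```

If the certificate chain is incomplete or invalid, the handshake raises `ssl.SSLCertVerificationError`, which points to the chain as the problem before any timing is considered.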

Summary and implementation checklist

The keys to optimizing KT servers in Seoul, South Korea are: choosing access and CDN sensibly, optimizing routing and BGP, tuning the Linux kernel and TCP parameters, adopting modern transport protocols, and monitoring continuously. Implementation checklist: baseline testing → routing optimization → kernel tuning → protocol upgrade → CDN deployment → regression verification. Following the "best / best value / cheapest" budget tiers gives the best achievable user experience at each level of investment.
